
I’ve spent the last 5+ years knee-deep in recruiting technology: testing platforms, sitting through product demos, and working with hiring teams that rely on AI-powered tools to move faster.

One thing’s clear: AI is changing how we hire. Rapidly.

Resume screening, candidate assessments, interview scheduling: AI can now handle it all. But as companies lean harder on this tech, governments are stepping in to set the rules.

Laws are cropping up across the U.S., Europe, and beyond, forcing businesses to rethink how they use AI in hiring.

If you’re using AI to hire, or thinking about it, you need to know these regulations. Non-compliance can mean legal headaches, fines of up to $1,500 per violation (under New York City’s Local Law 144, for instance), and even reputational damage.

Let’s break it all down.

Why are AI regulations necessary in recruiting?

AI is used in sourcing, scoring, and ranking candidates these days.

This sounds efficient until you realize the system might be unfairly screening out qualified candidates because of a gap in their work history, or because of their gender or race.

I’ve worked with hiring teams that discovered, after months of using AI, that their system was quietly favoring men over women for marketing roles. Another team found their screening tool penalized non-native English speakers.

Not because anyone set out to discriminate, but because the data the AI was trained on reflected human biases.

Without regulations, these errors don’t just slip through. They scale.

We’re talking hundreds, maybe thousands, of candidates losing out on jobs. And companies missing out on great talent, all because no one checked the system.

Regulations are not anti-AI. They’re pro-fairness.

Regulations aren’t about slowing down progress. They’re about ensuring AI works the way we want it to: efficient, yes, but also fair, transparent, and accountable.

In hiring, that means ensuring every candidate gets a fair shot regardless of their gender, race, or background.

"The goal shouldn't be to avoid AI out of fear of bias, but to build guardrails that keep systems ethical. When we design conversational tech, safety and human oversight have to be baked into the software from day one," says Stefan, founder of Clepher.

The legal map of AI in hiring: 10+ laws you should be aware of

Here's a comprehensive overview of key AI regulations affecting hiring practices worldwide:

United States: State-level regulations

1. Illinois: Artificial Intelligence Video Interview Act

The Artificial Intelligence Video Interview Act requires employers to notify candidates when AI is used to evaluate video interviews, obtain their consent, and ensure the deletion of interview recordings upon request.

2. New York City: Local Law 144 on Automated Employment Decision Tools (AEDTs)

Local Law 144 mandates that employers using automated employment decision tools conduct annual bias audits. Employers must also inform candidates about the use of such tools in the hiring process.

Non-compliance penalties:

Fines range from $500 for a first violation to $1,500 for subsequent violations. Each day of non-compliance is considered a separate offense.

3. Colorado: AI Act

The Colorado AI Act is the first broad AI accountability law in the U.S., regulating high-risk AI systems, including those used in hiring. It requires companies to evaluate the risks of AI systems and protect individuals from algorithmic discrimination.

Non-compliance penalties:

Specific fines are not yet detailed. Companies risk investigations, corrective actions, and potential legal penalties.

4. Maryland: Facial Recognition Law (HB 1202)

Maryland’s HB 1202 regulates the use of facial recognition technology in hiring, ensuring candidates are aware and consent before any biometric data is collected.

Non-compliance implications:

The law doesn't specify penalties, but it could expose employers to legal challenges and potential liabilities.

5. Utah: AI Policy Act

The Utah AI Policy Act focuses on transparency in generative AI usage across various sectors, including HR. It aims to ensure individuals know when they are interacting with AI.

Non-compliance penalties:

Businesses risk regulatory scrutiny and reputation damage for failure to disclose AI usage.

EU/UK regulations

1. European Union: AI Act

The EU AI Act is the world’s first comprehensive AI law. Approved by the European Parliament in March 2024, it classifies AI systems used in hiring as “high-risk” and mandates human oversight, transparency, and safeguards against bias in recruiting processes.

Non-compliance penalties:

Fines can reach up to €35 million or 7% of global turnover, whichever is higher, depending on the severity of the violation.

2. European Union: General Data Protection Regulation (GDPR)

The GDPR is Europe’s landmark data privacy law, effective since 2018, that regulates personal data processing, including AI-powered hiring systems. It limits fully automated hiring decisions and gives candidates the right to human review.

Non-compliance penalties:

Fines can reach up to €20 million or 4% of annual global turnover, whichever is higher.
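To make the "whichever is higher" wording concrete, here is a minimal Python sketch of how that ceiling works. The function name `fine_ceiling` and both example companies are hypothetical; the caps and percentages are the ones quoted in this article. It only computes the theoretical maximum, not what a regulator would actually impose.

```python
def fine_ceiling(fixed_cap: float, percent_of_turnover: float, annual_turnover: float) -> float:
    """Return the larger of a fixed cap and a percentage of annual global turnover."""
    return max(fixed_cap, annual_turnover * percent_of_turnover / 100)


# Hypothetical companies, turnover in euros.
large_company_turnover = 2_000_000_000
small_company_turnover = 100_000_000

# GDPR: EUR 20 million or 4% of turnover, whichever is higher.
gdpr_large = fine_ceiling(20_000_000, 4, large_company_turnover)  # the 4% share applies
gdpr_small = fine_ceiling(20_000_000, 4, small_company_turnover)  # the fixed cap applies

# EU AI Act (most severe tier): EUR 35 million or 7% of turnover.
ai_act_large = fine_ceiling(35_000_000, 7, large_company_turnover)

print(f"GDPR ceiling, large company: EUR {gdpr_large:,.0f}")
print(f"GDPR ceiling, small company: EUR {gdpr_small:,.0f}")
print(f"EU AI Act ceiling, large company: EUR {ai_act_large:,.0f}")
```

The takeaway: for larger companies the percentage drives the exposure, while for smaller ones the fixed amount does.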

3. United Kingdom: GDPR (UK Version)

Post-Brexit, the UK retained its version of GDPR, mirroring the EU’s standards for data privacy and automated decision-making in hiring.

Non-compliance penalties:

Fines can reach £17.5 million or 4% of annual global turnover, whichever is higher.

Canada: Artificial Intelligence and Data Act (AIDA)

The Artificial Intelligence and Data Act (AIDA) is Canada’s proposed federal law aimed at regulating high-impact AI systems, including those used in hiring. It seeks to ensure transparency, fairness, and privacy protections when AI is involved in employment decisions.

Non-compliance penalties:

Fines can reach up to $10 million CAD or 3% of global revenue, whichever is higher, for serious violations.

Here’s how to future-proof your AI recruiting practices

1. Conduct bias audits for AI tools

AI systems can unintentionally introduce bias if they are trained on biased datasets. It’s best to run independent bias audits every month using a third-party tool.

AI models learn from historical data. If that data contains biased patterns, audits reveal them so the models can be retrained to correct unfair outcomes.

Many regulations (such as New York City Local Law 144 and the European Union Artificial Intelligence Act) now require companies to regularly audit AI systems for discrimination.
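If you want a feel for what a bias audit measures, here is a rough Python sketch of one common metric: the impact ratio, meaning each group’s selection rate divided by the highest group’s selection rate. The group labels and numbers are made up for illustration, and the 0.8 cutoff is the widely used "four-fifths" rule of thumb, which I’m assuming here as a review trigger rather than quoting from any of the laws above. A real audit should come from an independent auditor.

```python
from collections import Counter


def impact_ratios(outcomes):
    """outcomes: iterable of (group, was_selected) pairs. Returns {group: impact ratio}."""
    applied = Counter()
    selected = Counter()
    for group, was_selected in outcomes:
        applied[group] += 1
        if was_selected:
            selected[group] += 1

    # Selection rate per group = selected / applied.
    rates = {group: selected[group] / applied[group] for group in applied}
    best_rate = max(rates.values())
    if best_rate == 0:
        raise ValueError("No candidates were selected, so impact ratios are undefined.")

    # Impact ratio = a group's selection rate relative to the best-performing group.
    return {group: rate / best_rate for group, rate in rates.items()}


# Hypothetical screening results: 100 applicants per group.
outcomes = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 28 + [("group_b", False)] * 72
)

ratios = impact_ratios(outcomes)
REVIEW_THRESHOLD = 0.8  # assumed rule-of-thumb cutoff, not a legal threshold
flagged = [group for group, ratio in ratios.items() if ratio < REVIEW_THRESHOLD]

for group, ratio in ratios.items():
    print(f"{group}: impact ratio {ratio:.2f}")
print("Groups to review:", flagged)
```

In this made-up example, group_b is selected at roughly 70% of group_a’s rate, so it gets flagged for a closer look.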

2. Maintain human oversight in hiring decisions

AI should be used to speed up the process, but the final decision should always rest with a human.

The reason is simple: algorithms can scan and match skills in seconds but often miss nuanced context, such as career transitions, learning potential, or the reasons behind employment gaps.

For example, a hiring team should use AI for screening, scheduling, assessments, and shortlisting, but stakeholders should review the shortlisted candidates and evaluate their experience in context.

Maintaining human oversight also helps organizations avoid compliance and fairness risks.

3. Protect candidate data and privacy

AI hiring systems often process large amounts of candidate data. Compliance with regulations like GDPR requires organizations to handle this data responsibly.

Steps to follow include:

- Collect only the data necessary for the hiring process.

- Store candidate data securely and restrict access where necessary.

- Allow candidates to request deletion of their personal data.

- Conduct Data Protection Impact Assessments (DPIAs) before deploying high-risk AI systems.

Strong data protection practices reduce both legal and reputational risks.
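Two of the steps above, collecting only what you need and honoring deletion requests, can be sketched in a few lines of Python. Everything here is hypothetical: the field names, the `ALLOWED_FIELDS` allowlist, and the in-memory dictionary standing in for a real candidate database. Treat it as an illustration of the idea, not a GDPR-compliant implementation.

```python
# Hypothetical allowlist: the only fields the screening step is permitted to keep.
ALLOWED_FIELDS = {"candidate_id", "skills", "years_experience", "work_authorization"}


def minimize(record: dict) -> dict:
    """Drop every field that is not on the allowlist before storing the record."""
    return {key: value for key, value in record.items() if key in ALLOWED_FIELDS}


def delete_candidate(store: dict, candidate_id: str) -> bool:
    """Remove a candidate's record on request. Returns True if something was deleted."""
    return store.pop(candidate_id, None) is not None


# A raw application often carries more than the hiring process needs.
raw_application = {
    "candidate_id": "c-101",
    "skills": ["python", "sql"],
    "years_experience": 6,
    "work_authorization": True,
    "date_of_birth": "1990-04-12",   # not needed for screening
    "marital_status": "single",      # not needed for screening
}

store = {}
stored_record = minimize(raw_application)
store[stored_record["candidate_id"]] = stored_record
print(sorted(stored_record))  # only allowlisted fields remain

# Later, the candidate asks for their data to be deleted.
deleted = delete_candidate(store, "c-101")
print("Deleted:", deleted, "| Records left:", len(store))
```

An allowlist is a deliberate choice over a blocklist: any new field someone adds to the application form stays out of storage until someone consciously approves it.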

4. Evaluate AI vendors carefully

If your organization uses third-party AI recruiting tools, including solutions built by AI development companies, compliance responsibilities still fall on your company. It’s important to assess vendors thoroughly before adopting their technology.

Ask vendors questions such as:

- Do you provide bias audit reports?

- How does your AI model prevent discriminatory outcomes?

- What transparency features exist for candidates?

- Are your systems compliant with GDPR, the EU AI Act, or local hiring regulations?

5. Document your compliance processes

While recruiters interact with AI tools daily, documentation and regulatory oversight are usually managed collaboratively across recruiting, HR operations, legal, and compliance teams.

The goal is a structured process where recruiters focus on using AI responsibly, while compliance and HR teams maintain the required records and oversight.

A practical setup may look like this:

- HR operations or recruiting ops teams document how AI tools are used in the hiring process.

- Legal or compliance teams store bias audit reports, risk assessments, and regulatory documentation.

- Vendor management or procurement teams maintain compliance documentation provided by AI vendors.

A quick AI recruiting compliance checklist for 2026


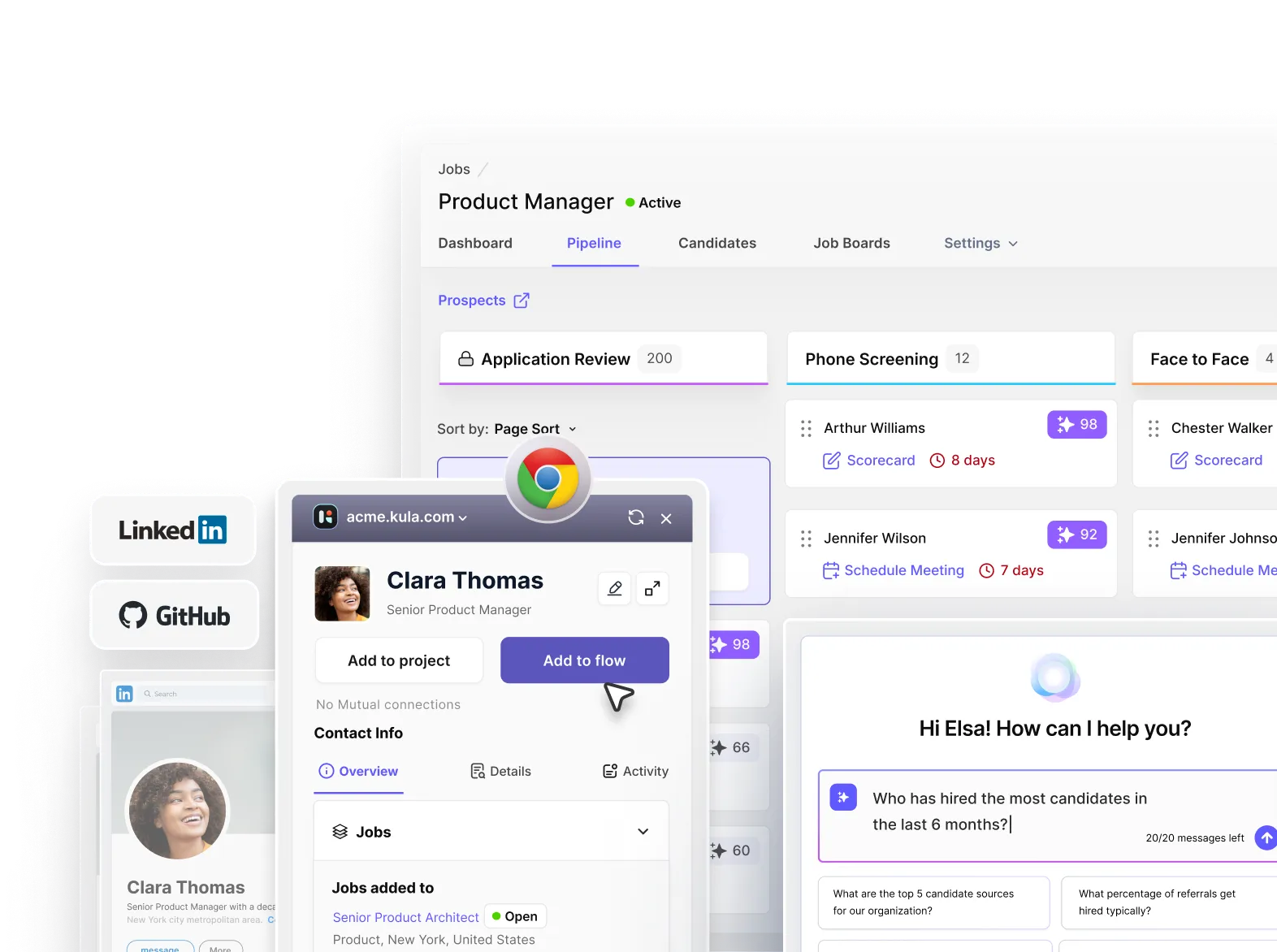

How AI regulation compliance looks with Kula

Kula has partnered with Warden AI, a trusted AI assurance platform, for its monthly independent AI bias audits. These audits help mitigate any potential bias and other risks.

Kula uses real-world data from Warden AI, not a synthetic dataset. This organic data helps Kula users get more accurate and relevant results.

Kula is an AI-native platform that scores applications with AI, assigning contextual, attribute-based scores and insights that help identify the best-fit profiles faster.

To ensure equitable outcomes with AI scoring, Kula conducted a rigorous examination using two scientific methods:

- Disparate Impact Analysis: This method detects whether an AI system disproportionately impacts specific demographic groups. The audit confirmed no bias related to sex or ethnicity in Kula’s AI.

- Counterfactual Analysis: This approach evaluates whether decisions change when attributes like sex or ethnicity are altered, and results showed Kula’s AI maintained fairness in 98–100% of cases.
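For readers curious what a counterfactual check looks like in principle, here is a simplified Python sketch. It is not Kula’s or Warden AI’s actual methodology: the scoring functions, candidate records, and threshold are all invented for illustration. The idea is to swap a protected attribute, rerun the scorer, and count how often the pass/fail decision stays the same.

```python
def counterfactual_consistency(score, candidates, attribute, values, threshold):
    """Share of candidates whose pass/fail decision is identical for every attribute value."""
    consistent = 0
    for candidate in candidates:
        decisions = set()
        for value in values:
            # Copy the record with only the protected attribute changed.
            variant = {**candidate, attribute: value}
            decisions.add(score(variant) >= threshold)
        if len(decisions) == 1:
            consistent += 1
    return consistent / len(candidates)


# Two toy scorers (hypothetical): one ignores sex, one leaks it into the score.
def fair_score(candidate):
    return candidate["years_experience"] * 10


def biased_score(candidate):
    bonus = 5 if candidate["sex"] == "male" else 0
    return candidate["years_experience"] * 10 + bonus


candidates = [
    {"years_experience": 3, "sex": "female"},
    {"years_experience": 5, "sex": "male"},
    {"years_experience": 7, "sex": "female"},
]

fair_rate = counterfactual_consistency(fair_score, candidates, "sex", ["female", "male"], 55)
biased_rate = counterfactual_consistency(biased_score, candidates, "sex", ["female", "male"], 55)

print(f"Fair scorer consistency: {fair_rate:.0%}")
print(f"Biased scorer consistency: {biased_rate:.0%}")
```

The fair scorer gives the same decision every time, while the biased one flips its decision for the candidate sitting right at the threshold, which is exactly the kind of case this analysis is meant to surface.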

Kula AI is also fully compliant with NYC Local Law 144 and is on track to meet the EU AI Act’s stringent requirements (effective August 2026).

You can review Kula’s latest bias audit results through its public dashboard.